January 05, 2021

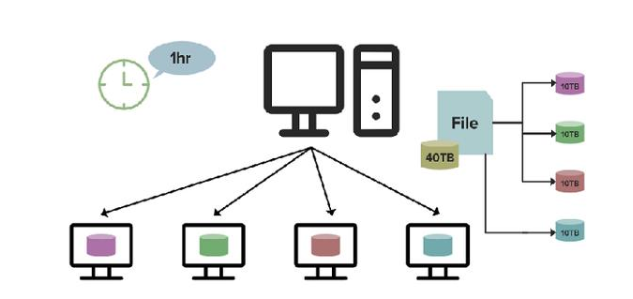

HDFS, the component responsible for distributed storage in Hadoop, is a distributed file system. It provides efficient, fault-tolerant, and scalable storage for the data entering the platform, and this rests on an important property of distributed file systems: scalability. In this installment on big data development, we look at the scalability design of distributed file systems. Because such systems typically use a master-slave architecture, scalability has to be considered at two levels: the storage nodes and the central node.

1. Scaling of storage nodes

When a new storage node joins the cluster, it actively registers with the central node and reports its own information. When files are later created or data blocks are appended to existing files, the central node can allocate space on the new node. This part is relatively easy, but a few related problems still have to be solved.

1) How can the load on each storage node be kept relatively balanced?

First, there must be metrics for evaluating the load on a storage node. Load can be judged from disk space usage alone, or from a combination of disk usage, CPU usage, and network traffic. Generally speaking, disk usage is the core metric. Second, when new space is allocated, priority goes to storage nodes with low resource usage. For existing storage nodes, if a node is overloaded or resource usage across nodes is unbalanced, data has to be migrated.
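The scoring and selection described above could be sketched roughly as follows. This is a minimal illustration, not how HDFS or any particular system does it: the `NodeStats` type, the weights (disk weighted highest, since it is the core metric), and the imbalance threshold are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class NodeStats:
    """Hypothetical per-node usage report; each value is a fraction from 0.0 to 1.0."""
    name: str
    disk: float
    cpu: float
    net: float


def load_score(node, w_disk=0.6, w_cpu=0.2, w_net=0.2):
    """Combine the three metrics into one score (weights are assumed, not prescribed)."""
    return w_disk * node.disk + w_cpu * node.cpu + w_net * node.net


def pick_node(nodes):
    """Prefer the node with the lowest combined load when allocating new space."""
    return min(nodes, key=load_score)


def needs_migration(nodes, threshold=0.2):
    """Flag the cluster as unbalanced when the score spread exceeds an assumed threshold."""
    scores = [load_score(n) for n in nodes]
    return max(scores) - min(scores) > threshold


if __name__ == "__main__":
    cluster = [NodeStats("a", 0.9, 0.5, 0.5), NodeStats("b", 0.3, 0.2, 0.1)]
    print(pick_node(cluster).name, needs_migration(cluster))
```

In practice the weights and threshold would be tuned per cluster; the point is only that allocation and the migration trigger can share the same load metric.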

2) How can newly added nodes be kept from collapsing under excessive short-term load?

When the system detects a newly added storage node, that node's resource usage is obviously the lowest in the cluster. If all write traffic were then routed to it, its short-term load could become excessive. Resource allocation therefore needs a warm-up period: over some span of time, write traffic is shifted to the new node gradually until a new equilibrium is reached.
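One way to picture the warm-up period is a weight that ramps up with the node's age. The sketch below assumes a linear ramp over a hypothetical 600-second window and a weighted random choice among nodes; the function names and parameters are illustrative and not taken from any real system.

```python
import random


def warmup_factor(age_s, warmup_s=600.0):
    """Linear ramp from 0.0 (just joined) to 1.0 (fully warmed up)."""
    if warmup_s <= 0:
        return 1.0
    return max(0.0, min(1.0, age_s / warmup_s))


def write_weight(free_fraction, age_s, warmup_s=600.0):
    """Routing weight: free capacity, damped while the node is still warming up."""
    return free_fraction * warmup_factor(age_s, warmup_s)


def choose_node(nodes, rng=random):
    """Pick a write target from a list of (name, free_fraction, age_s) tuples."""
    names = [name for name, _, _ in nodes]
    weights = [write_weight(free, age) for _, free, age in nodes]
    if sum(weights) == 0:
        # Every node is brand new or full: fall back to a uniform choice.
        return rng.choice(names)
    return rng.choices(names, weights=weights, k=1)[0]


if __name__ == "__main__":
    cluster = [("new", 0.95, 60.0), ("old", 0.40, 86400.0)]
    print(choose_node(cluster))
```

With these assumed numbers, a node that joined a minute ago gets a weight of about 0.095 despite being 95% free, so the older node still receives most writes until the ramp completes.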

3) If data migration is required, how can it be made transparent to the business layer?

With a central node, this work is relatively easy, because the central node takes care of everything: it determines which storage node is under pressure, decides which files to migrate and where, updates its own metadata, and handles writes or renames that arrive while the migration is in progress. Upper-level applications do not need to do anything. Without a central node, the cost is higher, and the overall system design has to account for this case from the start. Ceph, for example, adopts a two-layer structure of logical location and physical location. The logical layer (pool and placement group) stays unchanged during migration; moving a data block in the lower physical layer only changes the physical block address that the logical layer refers to. From the client's perspective, the location of the logical block does not change.
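The logical/physical split can be illustrated with a small mapping table. This is a deliberately simplified sketch: the `PlacementMap` class is hypothetical, and a plain CRC32 hash stands in for Ceph's actual placement algorithm, which is considerably more involved.

```python
import zlib


class PlacementMap:
    """Toy two-layer lookup: object name -> placement group -> physical node."""

    def __init__(self, pg_count, nodes):
        self.pg_count = pg_count
        # Logical placement groups are spread over the physical nodes round-robin.
        self.pg_to_node = {pg: nodes[pg % len(nodes)] for pg in range(pg_count)}

    def pg_of(self, object_name):
        """Logical location: depends only on the name, never on where data lives."""
        return zlib.crc32(object_name.encode("utf-8")) % self.pg_count

    def locate(self, object_name):
        """Physical location: resolved through the current mapping."""
        return self.pg_to_node[self.pg_of(object_name)]

    def migrate(self, pg, new_node):
        """Move a placement group by changing only the physical reference."""
        self.pg_to_node[pg] = new_node


if __name__ == "__main__":
    pmap = PlacementMap(8, ["node1", "node2"])
    pg = pmap.pg_of("report.csv")
    pmap.migrate(pg, "node3")
    print(pg == pmap.pg_of("report.csv"), pmap.locate("report.csv"))
```

The object's placement group is the same before and after `migrate`, which is the property that keeps migration invisible to clients addressing data by its logical location.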

2. Scaling of the central node

If there is a central node, its scalability must be considered as well. Because the central node is the control center of a master-slave design, its scalability is fairly limited: there is an upper bound, and it cannot exceed the capacity of a single physical machine. Various means can be used to raise this upper bound as far as possible. One approach is to store files as large data blocks. For example, the HDFS block size is 64 MB (the default in early versions; later versions default to 128 MB) and the Ceph data block size is 4 MB, both far larger than the 4 KB blocks of a stand-alone file system. The point is to greatly reduce the amount of metadata, so that the memory of a single central node can hold the metadata for a large enough amount of disk space.
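The effect of block size on metadata volume can be checked with some quick arithmetic. The sketch below assumes a flat 150 bytes of central-node memory per block, which is an illustrative figure rather than a measured one, and 1 PiB of stored data.

```python
KIB = 2 ** 10
MIB = 2 ** 20
PIB = 2 ** 50


def block_count(total_bytes, block_bytes):
    """Number of blocks needed to hold total_bytes (ceiling division)."""
    return -(-total_bytes // block_bytes)


def metadata_bytes(total_bytes, block_bytes, per_block=150):
    """Central-node memory needed, assuming a fixed per-block metadata cost."""
    return block_count(total_bytes, block_bytes) * per_block


if __name__ == "__main__":
    for label, size in [("4 KiB", 4 * KIB), ("4 MiB", 4 * MIB), ("64 MiB", 64 * MIB)]:
        gib = metadata_bytes(PIB, size) / 2 ** 30
        print(f"{label:>7} blocks: {block_count(PIB, size):>15,} blocks, ~{gib:,.1f} GiB metadata")
```

Under these assumptions, moving from 4 KiB to 64 MiB blocks cuts the block count, and with it the metadata, by a factor of 16,384, which is the difference between metadata that cannot fit in one machine's memory and metadata that easily can.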

Another approach is a multi-level central node. The top-level central node stores only directory metadata, which specifies which sub-master node is responsible for the files in a given directory; the sub-master node is then used to find the actual storage node of the file. A further option is for central nodes to share a storage device: multiple central nodes are deployed, but they share the same external storage or database where the metadata is kept, so the central nodes themselves are stateless. In this mode the request-handling capacity of the central node layer is greatly increased, but performance is affected to some extent.

That is a brief introduction to the scalability design of distributed file systems in big data development. Scalability is one of the important factors that affect the performance of a distributed file system, and it needs to be thought through carefully at the architectural level.