May 05, 2021

Arm recently released product details for the Arm® Neoverse V1 and N2 platforms, which target the varied needs of infrastructure applications. The two platforms are designed to address the wide range of workloads and applications running in today's infrastructure. Compared with the previous-generation N1, they deliver performance improvements of 50% and 40%, respectively. Arm also released the CMN-700, a key component for building high-performance SoCs based on the Neoverse V1 and N2 platforms.

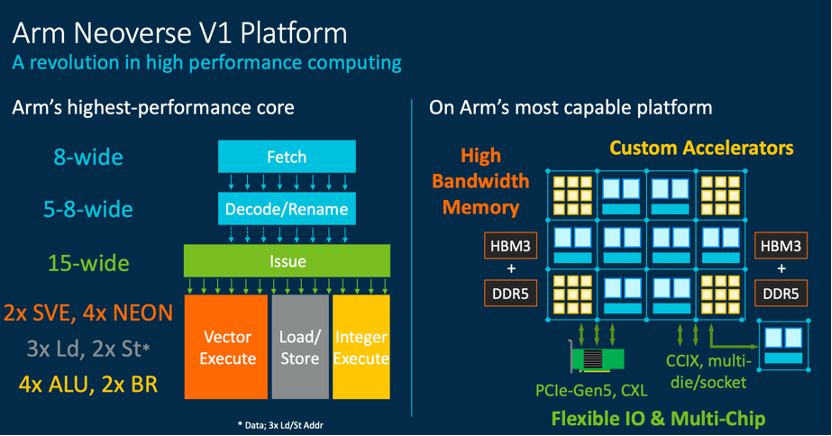

Neoverse V1: The widest micro-architecture + SVE vector operation.

Neoverse V1 platform / Arm

Compared with the previous-generation N1, Neoverse V1 delivers 50% higher performance, a 1.8 times improvement on vector workloads, and a 4 times improvement on machine learning workloads. Thanks to Arm's widest micro-architecture to date and its SVE support, Neoverse V1 can keep more instructions in flight, extend the lifetime of code, and give chip designers more flexibility. Some code is difficult to vectorize with NEON, Arm's existing SIMD instruction set, whereas SVE can take the same code and vectorize it automatically. Compared with NEON, SVE can speed up processing by nearly 3.5 times.
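As a rough illustration of code that is hard to vectorize with NEON, consider a loop that reads through an index array. NEON has no gather-load instruction, while SVE does, so a loop like the one below is a candidate for SVE auto-vectorization. This is a minimal sketch, not taken from Arm's material: the function name `gather_scale` and its parameters are hypothetical, and whether a given compiler actually vectorizes it depends on the compiler version and options (for example, building with GCC or Clang and an option such as `-march=armv8.2-a+sve`).

```c
#include <stddef.h>

/* Hypothetical example: scale values looked up through an index array.
 * The indirect access table[idx[i]] is a gather pattern. The source is
 * plain scalar C; any vectorization is left entirely to the compiler. */
void gather_scale(const float *restrict table, const int *restrict idx,
                  float *restrict out, size_t n, float gain)
{
    for (size_t i = 0; i < n; ++i) {
        out[i] = table[idx[i]] * gain;
    }
}
```

The point of the sketch is that the source stays ordinary C with no intrinsics; the same file can be built for NEON-only or SVE-capable targets without changes.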

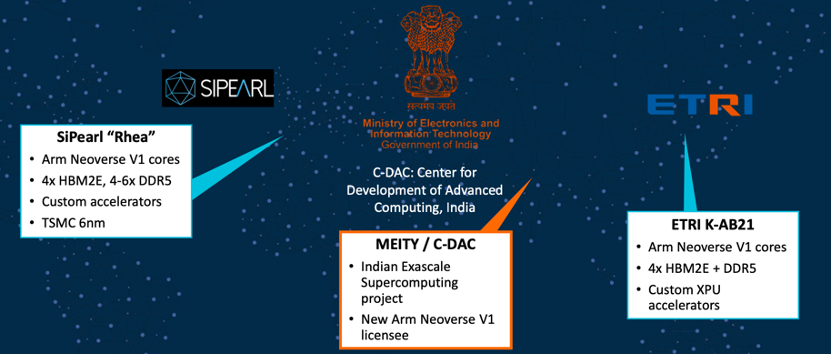

HPC projects using Neoverse V1 / Arm

At present, French chip company SiPearl, India's Ministry of Electronics and Information Technology (MeitY), and South Korea's Electronics and Telecommunications Research Institute (ETRI) have all adopted Neoverse V1 in their HPC projects.

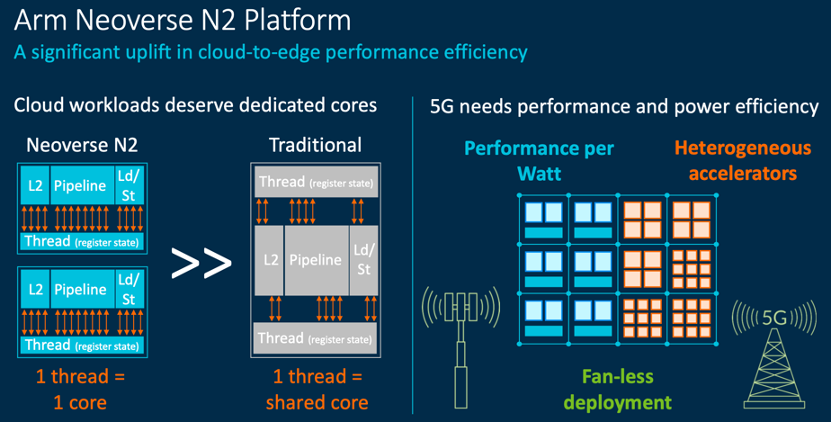

Neoverse N2: The first Armv9+SVE2 platform.

Neoverse N2 improves cloud to edge performance efficiency / Arm

Arm released the Armv9 architecture a few weeks ago to meet the global demand for ubiquitous specialized processing, and the newly announced Neoverse N2 is the first platform based on the Armv9 architecture.

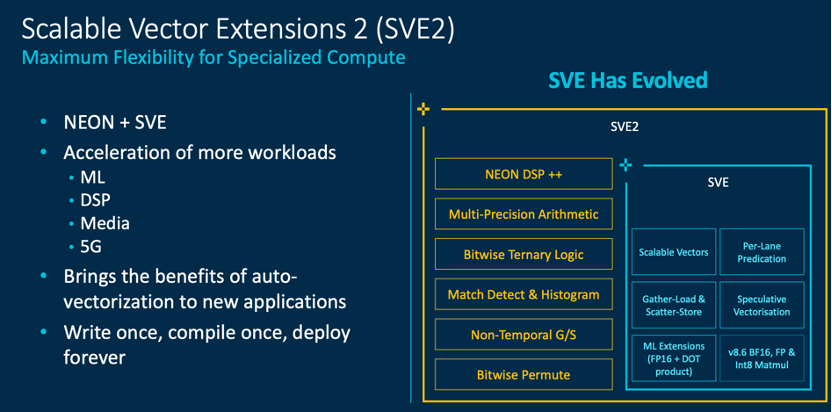

SVE2 / Arm

Compared with N1, Neoverse N2 maintains the same level of power and area efficiency while increasing single-thread performance by 40%. Neoverse N2 is also the first platform with SVE2 support. As a superset of SVE and Neon, SVE2 brings a large improvement in performance and efficiency from the cloud to the edge. Whereas SVE is mainly used to accelerate HPC, SVE2 can be applied broadly to machine learning, digital signal processing, and 5G applications, while retaining SVE's ease of programming and portability.
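The portability mentioned above is commonly attributed to SVE's vector-length-agnostic programming model, in which code does not hard-code the vector width and a predicate masks off lanes past the end of the data. The following is only a scalar C emulation of that idea, not real SVE or SVE2 intrinsics: the function `vla_add` and the parameter `vl` (standing in for the number of lanes the hardware provides) are hypothetical names introduced for illustration.

```c
#include <stddef.h>

/* Scalar emulation of a vector-length-agnostic loop.
 * `vl` is a hypothetical stand-in for the hardware vector length in lanes.
 * The result must not depend on the value of `vl`. */
void vla_add(const float *a, const float *b, float *out, size_t n, size_t vl)
{
    if (vl == 0) {
        return; /* a zero-lane vector makes no progress; nothing to do */
    }
    for (size_t base = 0; base < n; base += vl) {
        for (size_t lane = 0; lane < vl; ++lane) {
            size_t i = base + lane;
            /* Lanes at or beyond n are inactive, mimicking a loop predicate. */
            int active = (i < n);
            if (active) {
                out[i] = a[i] + b[i];
            }
        }
    }
}
```

Calling `vla_add` with `vl` set to 2, 4, or 16 yields the same output array, which is the property that lets one binary run across implementations with different vector widths.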

CMN-700: The next generation bus empowers heterogeneous SoC.

Neoverse CMN-700 / Arm

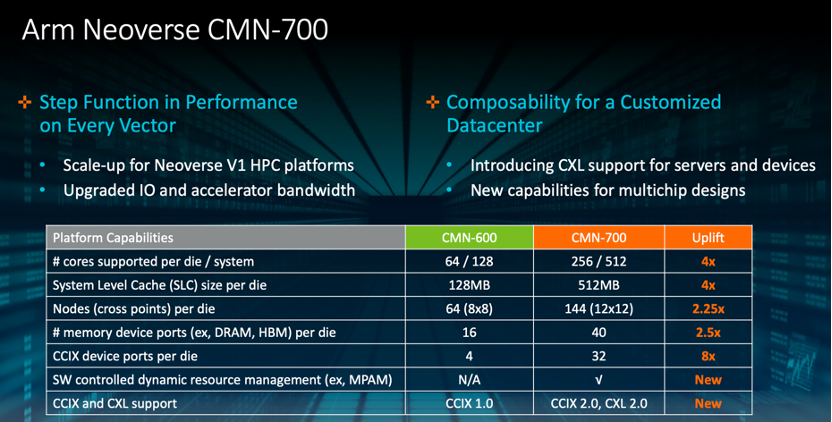

As an upgrade to the previous-generation CMN-600, the CMN-700 supports up to 512 cores. With support for CCIX 2.0 and CXL 2.0, it also gives customers more customization and expansion options and greater flexibility for tightly coupled heterogeneous computing.

Trends in heterogeneous computing

As heterogeneous computing develops, CPUs and GPUs are increasingly being combined. For example, Nvidia's recently announced Grace chip, based on Arm Neoverse, is a CPU for AI supercomputing. For the interconnect, Nvidia uses its self-developed NVLink technology rather than PCIe. Chris Bergey, senior vice president and general manager of the Arm Infrastructure Division, said that interconnecting with diverse accelerators, such as AI accelerators, is critical for the future market. CMN-700, for instance, already supports interconnect standards such as CXL and CCIX. In the future, Arm expects to bring more flexibility to the market and support more systems like Grace.

This heterogeneous trend also includes FPGAs. Zou Ting, senior global director of the Arm Infrastructure Division, added that some partners already use Neoverse N2 with FPGA accelerator cards in heterogeneous computing systems. Some Arm partners also combine an FPGA accelerator and N2 into a single SoC, using chiplet technology to achieve the flexibility of heterogeneous computing.

Widespread use of public cloud

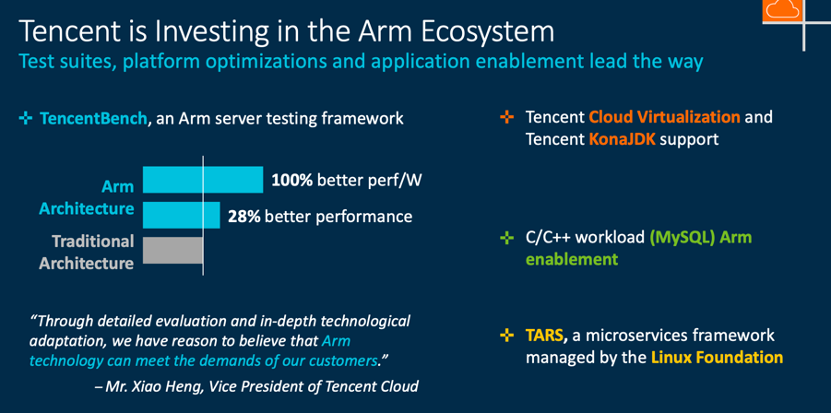

Tencent Cloud adds to the Arm ecosystem / Arm Tencent Cloud

Neoverse adoption is especially visible among public cloud vendors such as AWS, Alibaba Cloud, and Tencent Cloud. Huang Wenxin, director of Tencent's special testing technology center, said that Tencent and Arm formally signed a cooperation agreement last year to accelerate the evaluation and adaptation of Arm Neoverse technology. Tests with the TencentBench framework found that, thanks to more scalable CPU cores, Arm servers outperform traditional servers, especially in AI inference and image processing.

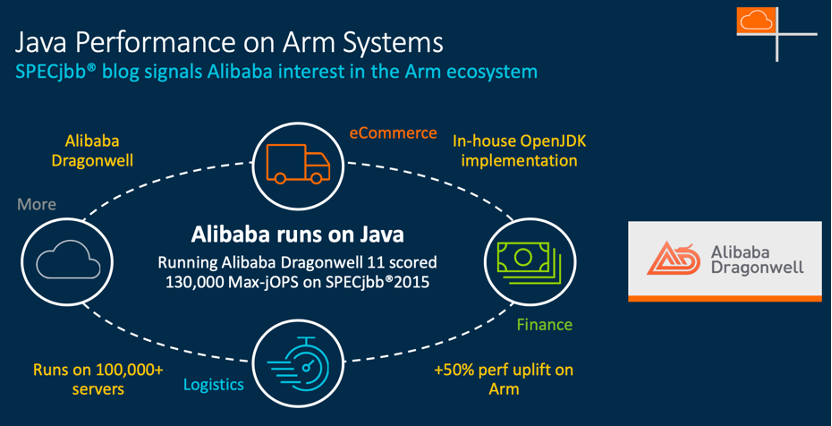

Arm architecture improves Java performance / Arm Alibaba Cloud

Alibaba chief engineer Kingsum Chow said: "When it comes to Arm CPU resources, our existing software falls into two categories: software that needs to be recompiled and software that does not. For the latter, we only need to run Java applications on the JVM (Java Virtual Machine). To that end, a year ago we began working with Arm staff to improve the performance of the JVM. Over the past year, we have increased the performance of some of our existing Java applications by 50% through the move from JDK8 to JDK11, through OpenJDK, and through Alibaba Dragonwell (an OpenJDK distribution)."