July 20, 2021

The ever-increasing model size poses challenges to existing architectures

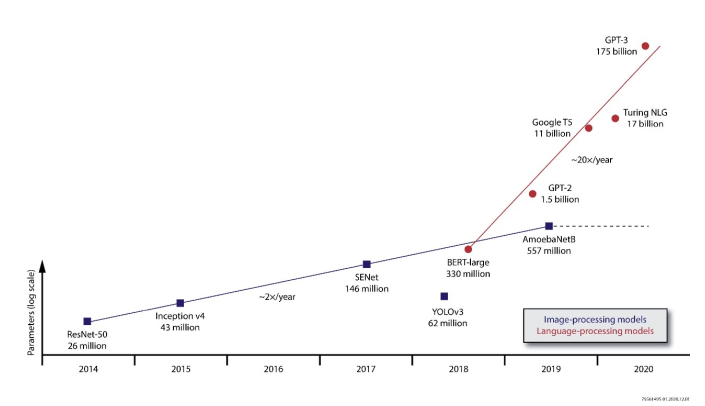

The demand for computing power in deep learning is growing at an alarming rate: in recent years, the time it takes to double has shortened from one year to three months. The continuous increase in the capacity of deep neural network (DNN) models has brought improvements in fields ranging from natural language processing to image processing, and deep neural networks are key technologies for real-time applications such as autonomous driving and robotics. For example, Facebook’s research shows a linear increase in the ratio of accuracy to model size. Training on a larger data set can improve accuracy even further.

At present, in many frontier fields, model size is growing much faster than Moore's Law, and trillion-parameter models are under consideration for some applications. Although few production systems will reach these extremes, the effect of parameter count on performance in these examples will ripple through to real-world applications. The increase in model size poses challenges for implementers. If they cannot rely entirely on the silicon scaling roadmap, other solutions are needed to meet the demand for additional model capacity, at a cost that fits the scale of deployment. This growth calls for customized architectures that maximize the performance of each available transistor.

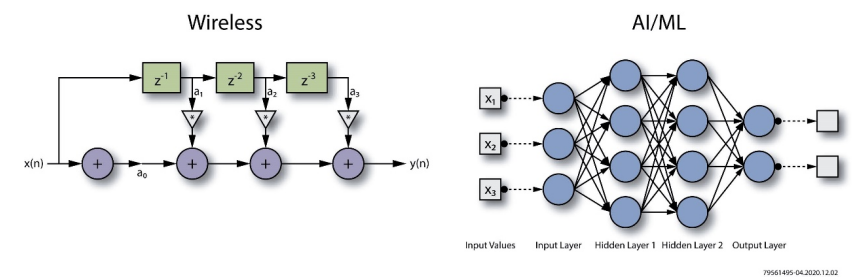

As the number of parameters grows, deep learning architectures are also evolving rapidly. While deep neural networks continue to make extensive use of combinations of traditional convolutions, fully connected layers, and pooling layers, other structures have also emerged, such as self-attention networks in natural language processing (NLP). They still require high-speed matrix- and tensor-oriented arithmetic, but changes in memory access patterns may cause problems for graphics processing units (GPUs) and existing accelerators.

Structural changes mean that commonly used metrics such as trillion operations per second (TOps) are becoming less relevant. The processing engine usually cannot reach its peak TOps score, because the memory and data transfer infrastructure cannot provide sufficient throughput unless the way the model is processed is changed. For example, batching input samples is a common technique because it often increases the parallelism available on many architectures. However, batching increases response latency, which is generally unacceptable in real-time inference applications.
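
The latency cost of batching can be illustrated with a toy model. This is only a sketch under stated assumptions: samples arrive at a fixed interval, the engine waits for a full batch, and the whole batch is processed together. The function name and all of the numbers are hypothetical and are not taken from any measurement.

```python
def worst_case_latency_s(batch_size, arrival_interval_s, batch_compute_s):
    """Latency seen by the first sample in a batch (hypothetical toy model)."""
    # The first sample waits for the remaining batch_size - 1 samples to arrive.
    fill_wait_s = (batch_size - 1) * arrival_interval_s
    # The whole batch is then processed together before any result is returned.
    return fill_wait_s + batch_compute_s


# Illustrative numbers only: one sample every 10 ms.
unbatched = worst_case_latency_s(1, 0.010, 0.004)
batched = worst_case_latency_s(8, 0.010, 0.012)
print(f"batch=1: {unbatched * 1e3:.1f} ms, batch=8: {batched * 1e3:.1f} ms")
```

Even when the batched computation is far more efficient per sample, the time spent filling the batch dominates the response time in this model.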

Numerical flexibility is a way to achieve high throughput

One way to improve inference performance, and to keep pace with the rapid evolution of architectures, is to adapt the numerical resolution of the calculation to the needs of each individual layer. Generally speaking, many deep learning models can tolerate a significant loss of precision and increased quantization error during inference, compared with what training requires. Training is usually performed using single- or double-precision floating-point arithmetic. These formats support high-precision values across a very wide dynamic range. This is very important in training, because the backpropagation algorithm commonly used in training makes subtle changes to many weights in each pass to ensure convergence.

Generally speaking, floating-point operations require a lot of hardware support to achieve low-latency processing of high-resolution data types. They were originally developed to support scientific applications on high-performance computers, where the overhead required to support them fully is not a major issue.

Many inference deployments convert models to use fixed-point arithmetic, which greatly reduces numerical precision. In these cases, the impact on model accuracy is usually minimal. In fact, some layers can be converted to use an extremely limited range of values, and even binary or ternary values are viable options.
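
As a minimal sketch of what such a conversion involves, the following example applies symmetric 8-bit quantization with a single scale factor per tensor. This is one common scheme chosen for illustration, not necessarily the one used in any particular deployment; the function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate real values from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.82, -0.31, 0.05, -1.27, 0.66]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

The rounding error of each value is bounded by half the scale factor, which is why layers whose values occupy a narrow range lose little when quantized.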

However, integer arithmetic is not always an effective solution. Some filters and data layers require high dynamic range. To meet this requirement, integer hardware may need to process data with 24-bit or 32-bit word lengths, which consumes more resources than the 8-bit or 16-bit integer data types that are readily supported in typical single instruction, multiple data (SIMD) accelerators.

A compromise is to use a narrow floating-point format, such as one that fits in a 16-bit word. This choice allows greater parallelism, but it does not overcome the inherent performance barriers of most floating-point data types. The problem is that after each calculation, both parts of the floating-point format need to be adjusted, because the most significant bit of the mantissa is not explicitly stored. The mantissa must therefore be realigned through a series of logical shift operations, and the exponent adjusted to match, so that the implicit leading "1" is always present. The advantage of this normalization is that any single value has only one representation, which is very important for software compatibility in user applications. However, for many signal processing and artificial intelligence inference operations, this is unnecessary.

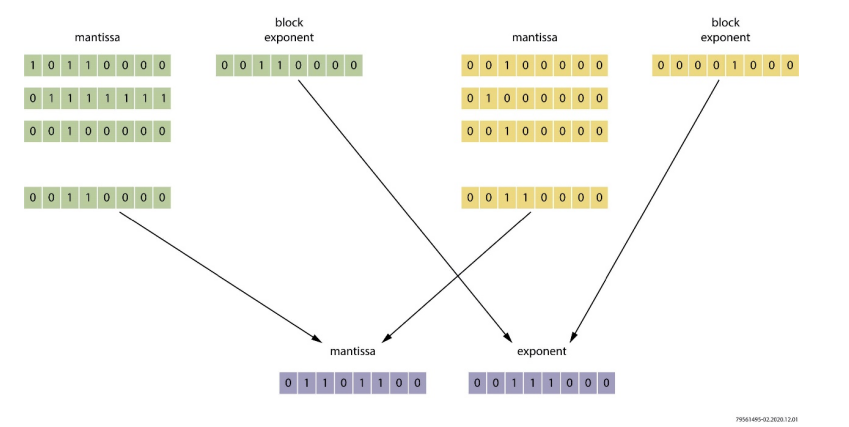

Most of the hardware overhead of these operations can be avoided by not normalizing the mantissa and adjusting the exponent after each calculation. This is the approach taken by block floating-point arithmetic. This data format has long been used with standard fixed-point digital signal processing (DSP) to improve performance in mobile device audio processing algorithms, digital subscriber line (DSL) modems, and radar systems.

With block floating-point arithmetic, there is no need to left-align the mantissa. Data elements used in a series of calculations can share the same exponent, which simplifies the design of the execution channel. The loss of precision caused by rounding can be minimized when the values occupy a similar dynamic range, so an appropriate range must be selected for each calculation block at design time. After the calculation block is complete, an exit function can round and normalize the values so that they can be used as regular floating-point values when needed.
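
The idea can be sketched in software as follows. This is an illustrative model only, assuming 8-bit signed mantissas and one shared power-of-two exponent per block; the function names, the mantissa width, and the rounding choice are assumptions and do not describe how any specific hardware implements the format.

```python
import math


def to_block_fp(values, mantissa_bits=8):
    """Encode floats as signed integer mantissas with one shared exponent."""
    max_abs = max(abs(v) for v in values)
    if max_abs == 0:
        return [0] * len(values), 0
    # frexp gives max_abs = m * 2**e with 0.5 <= m < 1.
    _, e = math.frexp(max_abs)
    # Choose the shared exponent so the largest value fits in the mantissa.
    shared_exp = e - (mantissa_bits - 1)
    limit = (1 << (mantissa_bits - 1)) - 1
    mantissas = [max(-limit, min(limit, round(v / 2.0 ** shared_exp)))
                 for v in values]
    return mantissas, shared_exp


def block_dot(a_mant, a_exp, b_mant, b_exp):
    """Dot product: integer multiply-accumulate, one exponent fix-up at exit."""
    acc = sum(x * y for x, y in zip(a_mant, b_mant))  # pure integer work
    return math.ldexp(acc, a_exp + b_exp)  # single scaling step at the end


a_mant, a_exp = to_block_fp([0.5, -0.25, 0.125, 1.0])
b_mant, b_exp = to_block_fp([1.0, 2.0, 4.0, 0.5])
result = block_dot(a_mant, a_exp, b_mant, b_exp)
print(a_mant, a_exp, b_mant, b_exp, result)
```

The inner loop contains only integer multiplies and adds. The exponents are combined once per block in the exit step, rather than after every operation as in conventional floating point.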

Supporting the block floating-point format is one of the functions of the machine learning processor (MLP), a highly flexible arithmetic logic unit provided in Achronix's Speedster®7t FPGA devices and Speedcore™ eFPGA architecture. The MLP is optimized for the dot products and similar matrix operations required by artificial intelligence applications. Its block floating-point support provides a substantial improvement over traditional floating point: the throughput of 16-bit block floating-point operations is 8 times that of traditional half-precision floating-point operations, making it as fast as 8-bit integer operations, while active power consumption is only 15% higher than for integer-only operations.

Another data type that may be important is the TensorFloat 32 (TF32) format. Compared with the standard single-precision format, TF32 reduces precision but maintains dynamic range. TF32 lacks the throughput optimizations of block-exponent processing, but it is useful for applications where easy portability of models created with TensorFlow and similar environments is very important. The high flexibility of the MLP in the Speedster7t FPGA makes it possible to process TF32 algorithms using a 24-bit floating-point mode. In addition, the high configurability of the MLP means that a new block floating-point version of TF32 can be supported, in which four samples share the same exponent. The block floating-point TF32 supported by the MLP is twice as dense as traditional TF32.
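
To make the precision trade-off concrete, the sketch below reduces a single-precision value to TF32 precision. TF32 keeps the 8-bit exponent of single precision but only 10 explicit mantissa bits instead of 23. For simplicity this example truncates the extra bits, whereas real hardware may round instead; the function name is hypothetical.

```python
import struct


def to_tf32(value):
    """Truncate a float32 value to TF32 precision (10 explicit mantissa bits)."""
    # Reinterpret the float32 encoding of the value as a 32-bit integer.
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    # float32 has 23 mantissa bits; clear the low 13 to keep the top 10.
    bits &= 0xFFFFFFFF & ~((1 << 13) - 1)
    return struct.unpack("<f", struct.pack("<I", bits))[0]


small_step = to_tf32(1.0 + 2.0 ** -11)  # below TF32 resolution near 1.0
large_value = to_tf32(1e30)             # exponent range is unchanged
print(small_step, large_value)
```

Small increments near 1.0 are lost, while very large or very small magnitudes remain representable because the exponent field is untouched.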

Optimized algorithm support for processing flexibility

Although the machine learning processor can support multiple data types, which is essential for inference applications, its full power is realized only when it is part of an FPGA architecture. The ability to easily define different interconnect structures sets FPGAs apart from most architectures. Because interconnect and arithmetic logic can be defined together in an FPGA, building a balanced architecture is simpler. Designers can not only build direct support for custom data types, but also define the most appropriate interconnect structure to transfer data to and from the processing engines. Reprogrammability further provides the ability to cope with the rapid evolution of artificial intelligence: a change of data flow in a custom layer is easy to support by modifying the FPGA logic.

One of the main advantages of an FPGA is that designs can easily switch between the optimized embedded computing engines and the programmable logic implemented by look-up table (LUT) cells. Some functions map well to the embedded computing engines, such as the Speedster7t MLP. For example, higher-precision arithmetic is best assigned to the MLP, because increasing the bit width causes the size of the functional units that implement functions such as high-speed multiplication to increase exponentially.

Lower-precision integer arithmetic can usually be mapped efficiently to the look-up tables (LUTs) commonly found in FPGA architectures. Designers can choose simple bit-serial multiplier circuits to implement logic arrays with high latency but high parallelism. Alternatively, they can allocate more logic to each function by constructing structures such as carry-save and carry-lookahead adders, which are commonly used to implement low-latency multipliers. The unique LUT configuration in Speedster7t FPGA devices enhances support for high-speed arithmetic: it provides an efficient mechanism for implementing Booth encoding, an area-saving multiplication method.
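
The area saving of Booth encoding comes from reducing the number of partial products. The following sketch shows textbook radix-4 Booth recoding, which turns an n-bit signed multiplier into n/2 digits in the range -2 to 2. It is a software illustration of the general technique, not a description of how the Speedster7t LUTs implement it; the function names are hypothetical.

```python
def booth_radix4_digits(multiplier, bits=8):
    """Recode a signed `bits`-wide integer into radix-4 Booth digits (-2..2)."""
    assert bits % 2 == 0
    assert -(1 << (bits - 1)) <= multiplier < (1 << (bits - 1))
    y = multiplier & ((1 << bits) - 1)  # two's-complement bit pattern
    digits = []
    prev = 0  # implicit bit to the right of the least significant bit
    for i in range(0, bits, 2):
        b0 = (y >> i) & 1
        b1 = (y >> (i + 1)) & 1
        digits.append(b0 + prev - 2 * b1)
        prev = b1
    return digits  # least significant digit first


def booth_multiply(multiplicand, multiplier, bits=8):
    """Multiply by summing one shifted partial product per Booth digit."""
    digits = booth_radix4_digits(multiplier, bits)
    return sum((d * multiplicand) << (2 * i) for i, d in enumerate(digits))


print(booth_radix4_digits(7), booth_multiply(13, 7))
```

An 8-bit multiplier yields four digits, so only four partial products are summed instead of eight. Each digit selects zero, the multiplicand, or twice the multiplicand, with an optional negation, all of which are cheap shift and invert operations.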

As a result, for a given bit width, the number of LUTs required to implement an integer multiplier can be halved. As issues such as privacy and security in machine learning become more important, one countermeasure may be to deploy a form of homomorphic encryption in the model. These protocols usually involve modular and bit-field operations that are well suited to LUT implementation, which helps consolidate the FPGA's position as a future-proof technology for artificial intelligence.

Data transmission is the key to throughput

To take full advantage of numerical customization in a machine learning environment, the surrounding architecture is equally important. With increasingly irregular graph representations, being able to deliver data where and when it is needed is a key advantage of programmable hardware. However, not all FPGA architectures are the same.

One problem with traditional FPGA architectures is that they evolved from early applications in which their main function was to implement interface and control logic. Over time, as these devices offered cellular base station manufacturers a way to move away from increasingly expensive ASICs, FPGA architectures incorporated DSP blocks to handle filtering and channel estimation functions. In principle, these DSP blocks can handle artificial intelligence functions. However, they were originally designed to process one-dimensional finite impulse response (1D FIR) filters. These filters pass data through the processing units along a relatively simple pipeline, in which a series of fixed coefficients is applied to a continuous sample stream.
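
The simple data flow of a 1D FIR filter is easy to see in code. The sketch below is a generic direct-form filter written for illustration, with a hypothetical function name and example coefficients: each new sample enters a shift register, and every output is a weighted sum of the most recent samples.

```python
def fir_filter(samples, coefficients):
    """Direct-form FIR: each output is a weighted sum of the latest inputs."""
    taps = len(coefficients)
    delay_line = [0.0] * taps  # shift register holding the latest samples
    outputs = []
    for x in samples:
        # The new sample enters at the front and the oldest one drops out.
        delay_line = [x] + delay_line[:-1]
        outputs.append(sum(c * s for c, s in zip(coefficients, delay_line)))
    return outputs


impulse_response = fir_filter([1.0, 0.0, 0.0, 0.0], [0.5, 0.25, 0.25])
print(impulse_response)
```

Each processing stage needs only its own fixed coefficient and the sample handed on by its neighbor, so the interconnect requirement is a single linear chain.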

Traditional processing architectures support convolutional layers relatively easily, but other layer types are more complicated. For example, a fully connected layer needs to apply the output of each neuron in one layer to all neurons in the next layer. As a result, the data flow between arithmetic logic units is much more complicated than in traditional DSP applications, and at higher throughput it puts greater pressure on the interconnect.

Although processing engines such as DSP cores can generate a result in each cycle, routing limitations inside the FPGA may prevent data from being delivered to them fast enough. Congestion is generally not a problem for the 1D FIR filters commonly found in the communication systems for which many traditional FPGAs were designed, because the results produced by each filtering stage can easily be passed on to the next stage. However, the higher degree of interconnection required for tensor operations, and the lower data locality of machine learning applications, make the interconnect even more important for any implementation.

The data locality problem in machine learning calls for attention to interconnect design at multiple levels. Because the most effective models have so many parameters, off-chip data storage is often necessary. The key requirements are a mechanism that can deliver data with low latency when needed, and efficient scratchpad memory close to the processing engines, so that prefetching and other strategies that exploit predictable access patterns can ensure data is available at the right time.

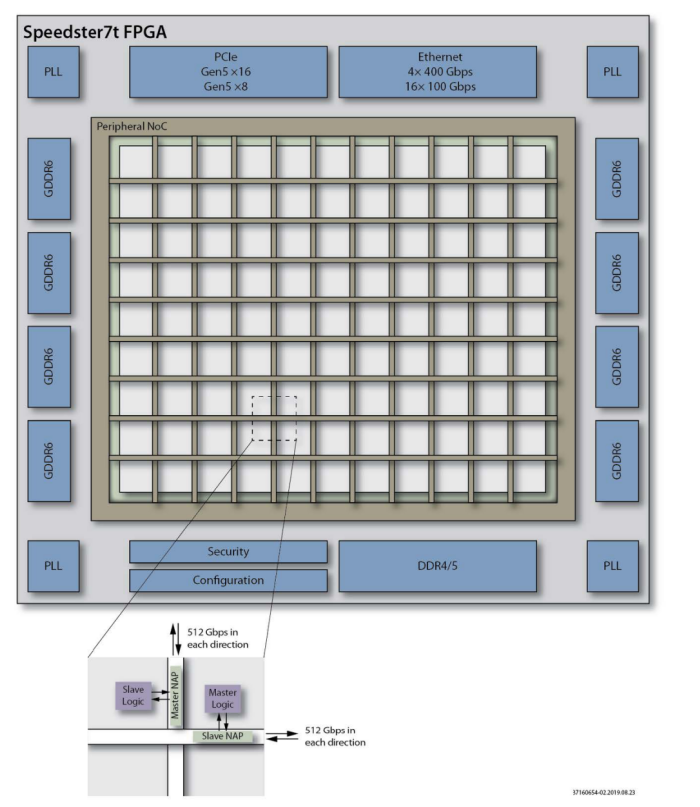

In the Speedster7t architecture, there are the following three innovations for data transmission:

·Optimized storage hierarchy

·Efficient local wiring technology

·A high-speed two-dimensional network on a chip (2D NoC) for on-chip and off-chip data transmission

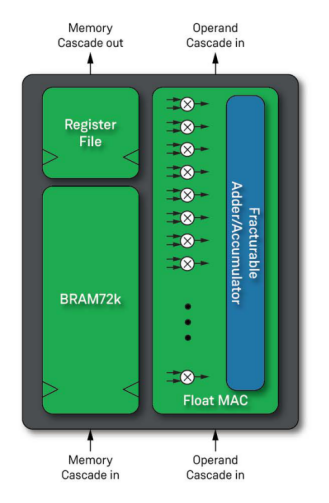

Traditional FPGAs usually have on-chip RAM blocks distributed throughout the logic fabric, placed at some distance from the processing engines. For a typical FPGA design this is an effective arrangement, but in an artificial intelligence environment it brings additional and unnecessary routing overhead. In the Speedster7t architecture, each machine learning processor (MLP) is associated with a 72kb dual-port block RAM (BRAM72k) and a smaller 2kb dual-port logic RAM (LRAM2k), where the LRAM2k can be used as a tightly coupled register file.

The machine learning processor (MLP) and its associated memory can be accessed separately through FPGA routing resources. However, if a memory is driving the associated MLP, it can use direct connections, thereby offloading FPGA routing resources and providing high-bandwidth connections.

In artificial intelligence applications, BRAM can be used to store values that are not expected to change in each cycle, such as neuron weights and activation values. LRAM is more suitable for storing temporary values with only short-term data locality, such as short pipelines of input samples and accumulated values for tensor contraction and pooling operations.

The architecture takes into account the need to divide large and complex layers into segments that can be processed in parallel, and to provide temporary data values for each segment. Both BRAM and LRAM have cascade connections, which make it easy to build the systolic arrays commonly used in machine learning accelerators.
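
For readers unfamiliar with systolic arrays, the following cycle-by-cycle simulation shows the general principle using a generic output-stationary array for matrix multiplication. It is a sketch for illustration only and does not model the Speedster7t cascade paths; the function name and the choice of dataflow are assumptions. Operands enter only at the left and top edges and are handed from neighbor to neighbor on each cycle.

```python
def systolic_matmul(a, b):
    """Simulate an output-stationary systolic array computing a @ b."""
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]    # one accumulator per processing element
    a_reg = [[0] * m for _ in range(n)]  # operands travelling left to right
    b_reg = [[0] * m for _ in range(n)]  # operands travelling top to bottom
    for t in range(n + m + k - 2):
        # Visit elements from bottom-right to top-left so that each one reads
        # the value its neighbor held at the end of the previous cycle.
        for i in reversed(range(n)):
            for j in reversed(range(m)):
                if j == 0:  # left edge: row i of `a`, delayed by i cycles
                    a_in = a[i][t - i] if 0 <= t - i < k else 0
                else:
                    a_in = a_reg[i][j - 1]
                if i == 0:  # top edge: column j of `b`, delayed by j cycles
                    b_in = b[t - j][j] if 0 <= t - j < k else 0
                else:
                    b_in = b_reg[i - 1][j]
                acc[i][j] += a_in * b_in
                a_reg[i][j], b_reg[i][j] = a_in, b_in
    return acc


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

Because every element communicates only with its immediate neighbors, the structure maps naturally onto short, dedicated cascade connections rather than general-purpose routing.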

The MLP can be driven cycle by cycle from the logic array, from cascade paths that share data, and from the associated BRAM72k and LRAM2k. This arrangement makes it possible to build complex scheduling mechanisms and data processing pipelines, so that the MLP is kept continuously supplied with data while supporting the widest possible range of connection patterns between neurons. Keeping the MLP continuously supplied with data is the key to improving effective TOps.

The output of the MLP has the same flexibility: it can be used to create systolic arrays and more complex routing topologies, providing an optimized architecture for each type of layer that may be required in a deep learning model.

The 2D NoC in the Speedster7t architecture provides a high-bandwidth connection from the programmable logic of the logic array to the high-speed interface subsystems located in the I/O ring for connecting to off-chip resources. These include GDDR6 for high-speed memory access and off-chip interconnect protocols such as PCIe Gen5 and 400G Ethernet. This structure supports the construction of highly parallel architectures and highly data-optimized accelerators centered on the FPGA.

By routing high-density data packets to hundreds of access points distributed throughout the logic array, the 2D NoC makes it possible to significantly increase the available bandwidth on the FPGA. Traditional FPGAs must use thousands of individually programmed routing paths to achieve the same throughput, and doing so eats up local interconnect resources. By delivering gigabits of data to a local area through a network access point, the 2D NoC alleviates routing congestion while supporting easy and fast data transfer to and from MLPs and LUT-based custom processors.

The related resource saving is considerable: a 2D NoC implemented in traditional FPGA soft logic with 64 NoC access points (NAPs), each providing a 128-bit interface running at 400 MHz, would consume 390k LUTs. In contrast, the hard 2D NoC in the Speedster 7t1500 device has 80 NAPs, does not consume any FPGA soft logic, and provides higher bandwidth.
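
The figures quoted for the soft-logic NoC imply a peak bandwidth that can be worked out directly. The calculation below is a back-of-the-envelope sketch that assumes every access point transfers one full-width word per clock cycle in one direction; protocol overhead and actual utilization are not modeled, and the function name is hypothetical.

```python
def aggregate_bandwidth_bps(access_points, interface_bits, clock_hz):
    """Peak one-way bandwidth if every access point moves a word per cycle."""
    return access_points * interface_bits * clock_hz


per_nap_bps = aggregate_bandwidth_bps(1, 128, 400_000_000)
soft_noc_bps = aggregate_bandwidth_bps(64, 128, 400_000_000)
print(f"per NAP: {per_nap_bps / 1e9:.1f} Gbit/s, "
      f"total: {soft_noc_bps / 1e12:.4f} Tbit/s")
```

Under these assumptions, each access point carries 51.2 Gbit/s and the 64-point soft NoC peaks at roughly 3.3 Tbit/s, which is the bandwidth that would cost 390k LUTs in soft logic.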

There are other advantages of using the 2D NoC. Because the interconnect between adjacent regions is less congested, the logic design is easier to lay out. Because there is no need to allocate resources from adjacent regions to implement the control logic of high-bandwidth paths, the design is also more regular. Another benefit is that partial reconfiguration is greatly simplified: NAPs allow individual regions to become effectively independent units, which can be swapped in and out according to the needs of the application. This reconfigurability, in turn, supports different models that are needed at particular times, or architectures such as on-chip fine-tuning or regular retraining of models.

Conclusion

As models grow and their structures become more complex, FPGAs are becoming an increasingly attractive foundation for building efficient, low-latency AI inference solutions, thanks to their support for a variety of numerical data types and data-oriented functions. However, simply applying traditional FPGAs to machine learning is far from enough. The data-centric nature of machine learning requires a balanced architecture to ensure that performance is not artificially limited. By taking into account the characteristics of machine learning, both today and as it develops in the future, the Achronix Speedster7t FPGA provides an ideal foundation for AI inference.